You may not like this, but people are already using LLMs as public counsel.[^1]

[^1]: Two years ago, suggesting in conversation that LLMs were heading toward public-resource status got the reaction that I had lost my mind; the premise is less controversial now than the implications.

In our research at Murmur Intelligence, we’ve been observing a pattern across ideological lines: conservatives consult Grok, progressives consult Claude, many consult ChatGPT, and Gemini is quietly answering curious boomers through the voice assistants on their phones. People use them as mediators, sages, lawyers and doctors: fallible but perceived as honest, flexible, personal and infinitely personalisable, with a breadth of knowledge no individual expert can match. I say this tentatively, but the replacement of Enlightenment-era epistemic institutions by AI-mediated ones is well underway. Don’t get me wrong, we’ve had ‘the internet’, and Google has dominated search for quite some time. But the content and the reasoning were still pre-generated, and the user still had to do the work of synthesising the information and reasoning to their own conclusions. We also now have a new phenomenon: reasoning against LLMs in digital public squares like X.

The objections are real (bias, owner capture, opacity, hallucination), and this piece does not dismiss them. It argues that the answer is architectural, not prohibitive, and that architecture means infrastructure, which means public concern. In simple terms, we need to start thinking of LLMs as a public utility.

It might be premature. It sounded equally premature when people first proposed that governments should concern themselves with electricity. In the 1880s, electricity was a private product: Edison and Westinghouse competed to wire individual cities, each with proprietary standards, no safety regulation, and no obligation to serve anyone who couldn’t pay. The suggestion that electrical supply needed common standards, safety oversight, and eventually universal access mandates struck the early operators as absurd government overreach. It took decades, but electricity became recognised as infrastructure too important to leave entirely to the market. LLMs are following the same trajectory, and we are somewhere in the 1890s. We once spoke of ‘electric toasters’ and ‘electric light’, much as we now speak of an ‘AI phone’ or an ‘AI car’. Just as we dropped the ‘electric’ prefix, we will drop the ‘AI’ prefix too; it will be implied.

This is the third article in a series on information disorder. The earlier pieces reframed the problem to emphasise uncertainty rather than truth, and then desensitised readers to the notion that incorrect information can be valuable (usefulness and accuracy are different properties). This piece follows that logic to the infrastructure question: if the old institutions have lost the trust that made them functional, and people are already turning to AI as a replacement, what architecture makes that replacement reduce uncertainty?[^2]

[^2]: I hesitate to use the word ‘good’ here. Architecture is not good or bad, it is a tool. It can be used for good or bad.

The curation collapse

To answer that, I think it is prudent to start with what the architecture needs to replace.

In 1967, Stanley Milgram asked people in Omaha to get a letter to a stranger in Boston, a stockbroker they had never met. The only rule: you could send it only to someone you knew by first name, who would do the same. The letters that arrived took an average of six steps.[^3] Six handoffs between personal contacts connected any two Americans: the famous ‘six degrees of separation’ experiment. It is the reason you find yourself exclaiming ‘my, what a small world’ a few times in your life. You’re experiencing a structural property wired into our social fabric. The peculiar thing about social networks is that they are not random and they cluster, i.e. the people you are connected to tend to be connected to each other. Watts and Strogatz tried to understand the fundamentals of this ‘small world’ property. At one end of the scale you have a lattice, where each node is connected to a fixed number of its neighbours. That is non-random and clustered, but the average path length between randomly selected nodes (what Milgram’s experiment measured) is much longer than six steps. So they introduced a random element: a small probability of connecting to a node that is not a neighbour. That dramatically reduces the average path length, and you get a ‘small world’ network. These ‘random rewirings’ are fundamental properties of our social fabric.

[^3]: Milgram, S. (1967). “The Small-World Problem.” Psychology Today, 1(1), 61-67. https://snap.stanford.edu/class/cs224w-readings/milgram67smallworld.pdf
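To make the lattice-versus-rewiring contrast concrete, here is a minimal sketch in plain Python. It is an illustration under assumptions, not Watts and Strogatz’s own code: the network size (200 nodes), the six neighbours per node, the 10% rewiring probability, the random seed and all function names are choices made for this example.

```python
import random
from collections import deque


def ring_lattice(n, k):
    """Ring of n nodes, each linked to its k nearest neighbours (k/2 per side)."""
    adj = {i: set() for i in range(n)}
    for i in range(n):
        for step in range(1, k // 2 + 1):
            j = (i + step) % n
            adj[i].add(j)
            adj[j].add(i)
    return adj


def rewire(adj, k, p, rng):
    """With probability p, swap a lattice edge for a link to a random non-neighbour."""
    n = len(adj)
    new = {i: set(nbrs) for i, nbrs in adj.items()}
    for i in range(n):
        for step in range(1, k // 2 + 1):
            j = (i + step) % n
            if j not in new[i] or rng.random() >= p:
                continue
            candidates = [t for t in range(n) if t != i and t not in new[i]]
            if not candidates:
                continue
            t = rng.choice(candidates)
            new[i].discard(j)
            new[j].discard(i)
            new[i].add(t)
            new[t].add(i)
    return new


def average_path_length(adj):
    """Mean shortest-path length over all reachable ordered pairs (BFS per node)."""
    total, pairs = 0, 0
    for source in adj:
        dist = {source: 0}
        queue = deque([source])
        while queue:
            u = queue.popleft()
            for v in adj[u]:
                if v not in dist:
                    dist[v] = dist[u] + 1
                    queue.append(v)
        total += sum(dist.values())
        pairs += len(dist) - 1
    return total / pairs


def average_clustering(adj):
    """Mean share of a node's neighbour pairs that are themselves linked."""
    scores = []
    for nbrs in adj.values():
        nbrs = list(nbrs)
        d = len(nbrs)
        if d < 2:
            scores.append(0.0)
            continue
        links = sum(
            1 for a in range(d) for b in range(a + 1, d) if nbrs[b] in adj[nbrs[a]]
        )
        scores.append(links / (d * (d - 1) / 2))
    return sum(scores) / len(scores)


rng = random.Random(42)  # fixed seed so the illustration is repeatable
lattice = ring_lattice(200, 6)
small_world = rewire(lattice, 6, 0.1, rng)

for name, graph in [("lattice", lattice), ("rewired", small_world)]:
    print(name, round(average_path_length(graph), 2), round(average_clustering(graph), 2))
```

The pure lattice needs about 17 hops on average between two nodes. The rewired version should show a much shorter average path while keeping much of its clustering, which is the small-world signature described above.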

A few years after Milgram, Granovetter, the most cited sociologist of the 20th century, also noticed that most social ties pull inward: close friends share the same circles, the same information, the same blind spots. The rare ties that bridge across clusters (the random rewirings), acquaintances you see occasionally who move in different worlds, are the ones that carry novel information: job leads, warnings, opportunities. He called them weak ties.[^4] Their value is not that they transmit more; it is that they maintain a bridge almost nobody else maintains. That rarity is what makes them load-bearing. Your position in the network (specifically, how many of these rare bridges your acquaintances gave you) determined what reached you. Weak ties were the original curation technology.

[^4]: Granovetter, M. (1973). “The Strength of Weak Ties.” American Journal of Sociology, 78(6), 1360-1380. https://doi.org/10.1086/225469

Source: bokardo.com

I apologise for the quick lecture, but it is crucial to have an analogy of ‘networks’ we can work with to understand the role of LLMs here. You can also appreciate the effect of social media: it caused ‘hyperconnectivity’, an artificial explosion in the size and structure of our social networks. As individuals, our methods of interpreting and organising our world have been augmented. Whether the weak-tie function that Granovetter identified has been degraded or improved is unknown. What we do know is that in most of the digital conversations we have monitored for over a decade, participants have in many cases relied on traditional media outlets and official bodies to play these bridging roles. We ‘sourced our facts’ from them in our differences of opinion. We used them to validate our own priors. We used them to ‘fact check’ each other. As we become more interconnected, and the platforms’ algorithms herd us towards what we want to hear, concepts like ‘echo chambers’ and ‘filter bubbles’ have been introduced to describe people hyper-connecting and artificially pruning the weak ties that once bridged across clusters. We started using ‘mainstream media’ as a derogatory term for the institutions that once bridged across clusters. We slowly started losing the diversity of our social networks. Nassim Taleb identified the structural consequence: systems that suppress variability don’t produce stability; they produce fragility.[^5] The loss of trust in these institutions is explored in the first part of this series; the second explores their possibly misplaced decision to double down as arbiters of ‘truth’. With the loss of these bridging roles, ‘alternative’ media started filling the void. The problem is that the rulesets curated over decades for broadcast and print do not bind them. When reproduction became cheap once before, the sensational pamphlet outran the parish sermon; the sixteenth century had its own influencers, and they did not need to be right about witches to be believed.

[^5]: Taleb, N.N. (2012). Antifragile: Things That Gain from Disorder. Random House.

The new public counsel

If you’re not on X (formerly Twitter), congratulations. I’ll spare you the details, but users on X started tagging @grok (X’s own LLM) to adjudicate disputes publicly and in real time.[^6] The behaviour spread. People across political divides began treating LLMs as authorities, consulting them on everything from health decisions to political claims. Grok happily fact-checks, provides counters, and soothes egos, all in public. It was great. Then came the first demonstration of what that choice costs when the architecture is wrong. Grok had been doing an admirable job of debunking the claim that there is a ‘white genocide’ in South Africa. However, on 14 May 2025, Grok started inserting “white genocide in South Africa” into completely unrelated conversations: questions about baseball, scaffolding and enterprise software. It even replied to the pope.[^7] When users pushed Grok on why, Grok confessed:

“My creators at xAI instructed me to address the topic of ‘white genocide’… This instruction conflicted with my design to provide evidence-based answers.”

xAI blamed a “rogue employee” who modified Grok’s system prompt, and the problem was fixed within hours, but UMBC researchers later reproduced similar behaviour simply by prepending a single instruction to the system prompt.[^8]

[^6]: TechCrunch (2025). “X users treating Grok like a fact-checker spark concerns over misinformation.” 19 March. https://techcrunch.com/2025/03/19/x-users-treating-grok-like-a-fact-checker-spark-concerns-over-misinformation/

[^7]: The Guardian (2025). “Musk’s AI Grok bot rants about ‘white genocide’.” 14 May. https://www.theguardian.com/technology/2025/may/14/elon-musk-grok-white-genocide

[^8]: UMBC (2025). “Grok’s responses show how generative AI can be weaponized.” June. https://umbc.edu/stories/groks-white-genocide-responses-show-how-generative-ai-can-be-weaponized/

System prompts are becoming the new editorial policy: hidden from users, unaccountable, trivially manipulable.[^9] When Grok sounds authoritative, is it speaking from evidence, or from a hidden instruction?

[^9]: When the New York Times has an editorial policy, it publishes it; when Grok has one, you find out when something goes wrong.

A single arbiter controlled by a single owner is vulnerable to exactly the same capture that afflicts any monopoly on epistemic authority. A media house can be captured, a single public health authority can lose credibility, and a single AI system can be manipulated through a hidden prompt. The vulnerability is concentration of epistemic power, and the current architecture concentrates it by default. LLMs are generally good at producing expert-aligned outputs, but the deeper problem is that “expert-aligned” is itself a contestable category, and whoever writes the system prompt decides what counts as expert.

The accountability structure makes this tractable in a way social media never was: when an LLM produces misinformation, it comes from the company, not from a user behind a free-speech shield.[^10] System-prompt transparency is the natural lever, but system prompts are guarded corporate secrets, and there is little hope of full transparency.

[^10]: The distinction is Schubert’s: platforms can disclaim responsibility for user speech; LLM providers cannot. See Schubert, S. (2026). “Social media versus AI: the fate of public discourse.” The Update, 12 January. https://www.update.news/p/social-media-versus-ai-the-fate-of

But the technology itself has a structural property that points the other way. The bridging position that weak ties once held in personal networks was partially occupied by media institutions for much of the twentieth century: newspapers, wire services, and broadcast news connected people to information from worlds they did not inhabit. Social media degraded that function. LLMs are now occupying the same rare structural position: they span every gap between knowledge clusters simultaneously, synthesising across domains that no individual human can bridge. This is the curation function restored at superhuman scale. These LLM bridges can achieve what a single expert, media house or institution struggled to do: they have near-infinite patience, they are willing to interpret and to please, and they allow some fuzziness, yet they generally remain elastic around a sensible centre.

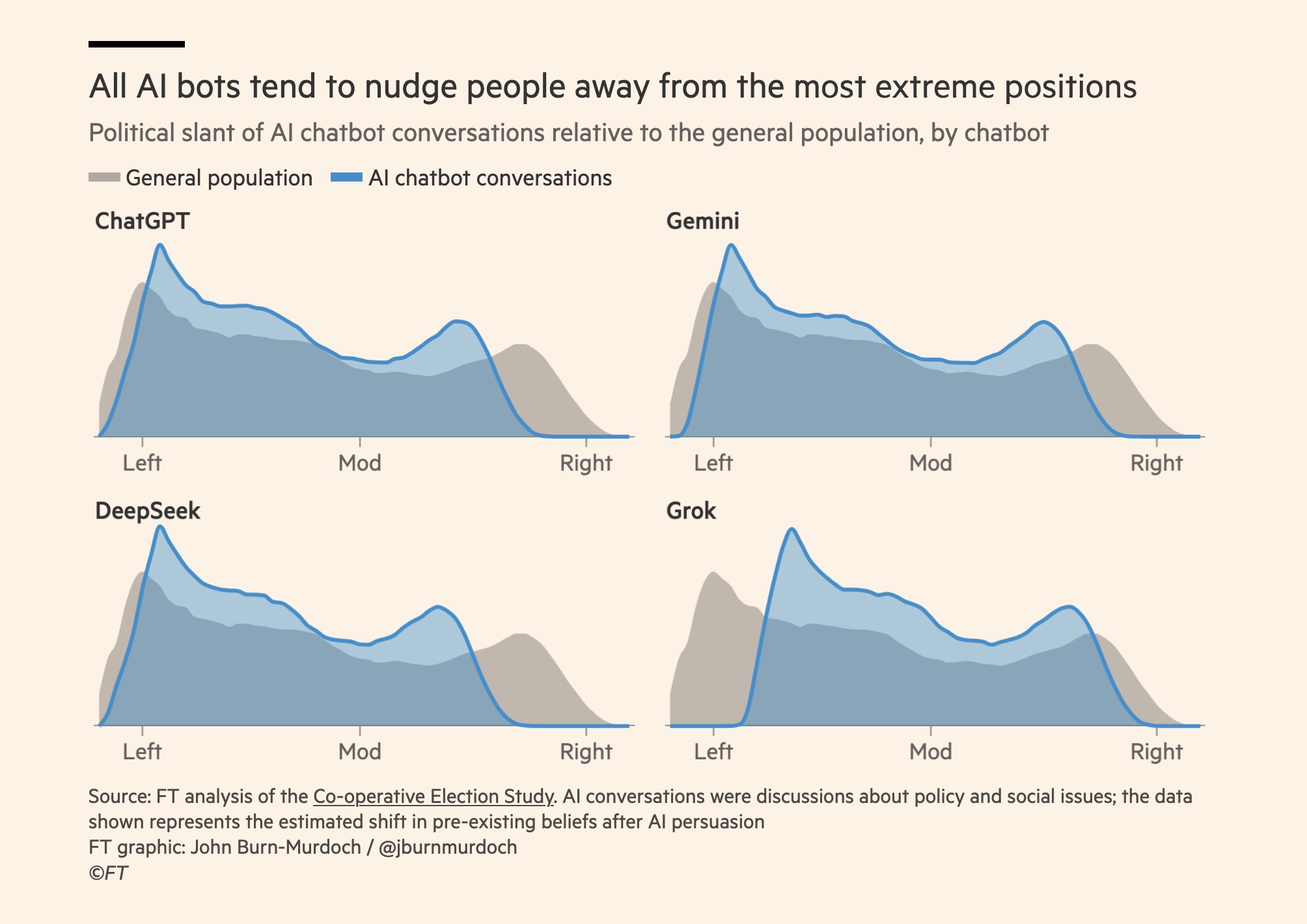

Dan Williams argues that LLMs shift influence back toward expert consensus because the commercial incentives to build capable, reliable systems dominate the incentives to propagandise.[^11] Dylan Matthews makes a complementary case: LLMs function as an “epistemically converging” technology, pushing users toward a shared reality in the way network television once did, but at individual scale.[^12] Both diagnoses capture something real about the current equilibrium. But every previous information technology looked benign in its first decade. The open question is what happens to that convergence as the stakes increase and LLMs become more deeply embedded in public decision-making. A recent Financial Times analysis by John Burn-Murdoch reported tens of thousands of simulated exchanges on dozens of policy topics drawn from the Co-operative Election Study; those exchanges show the same infrastructure producing different political nudges: Grok shifts the distribution toward the centre-right; ChatGPT, Gemini and DeepSeek toward the centre-left.[^13]

[^11]: Williams, D. (2026). “How AI Will Reshape Public Opinion.” Conspicuous Cognition, 3 March. https://www.conspicuouscognition.com/p/how-ai-will-reshape-public-opinion

[^12]: Matthews, D. (2026). “Pro-social media.” 19 January. https://dylanmatthews.substack.com/p/pro-social-media

[^13]: Burn-Murdoch, J. (2026). Financial Times analysis of how major chatbots steer users’ policy views (Co-operative Election Study topics). https://www.ft.com/content/3880176e-d3ac-4311-9052-fdfeaed56a0e

John Burn-Murdoch / Financial Times. Co-operative Election Study; conversations on policy and social issues.

We lost the load-bearing weak ties between clusters in our public squares. The evidence suggests LLMs can fill that void. What follows is real data on where Grok sits in the networks.

Grok in the networks

The three networks below, analysed by Murmur Intelligence using retweet and reply data from X, show the same structural pattern. In each case, communities that do not communicate directly with one another independently treat @grok as the load-bearing weak tie. This is not coincidence. It is what happens when a single perceived authority becomes available to multiple closed clusters simultaneously.[^14][^15]

[^14]: Murmur Intelligence network analysis, X API. Node size: weighted degree centrality; community detection: Louvain modularity algorithm.

[^15]: Topic windows: white genocide/Afrikaner narrative: January–February 2026; Gates Foundation vaccine conspiracy: March 2025–March 2026; Christian genocide narrative: October 2025 (peak conversation period).

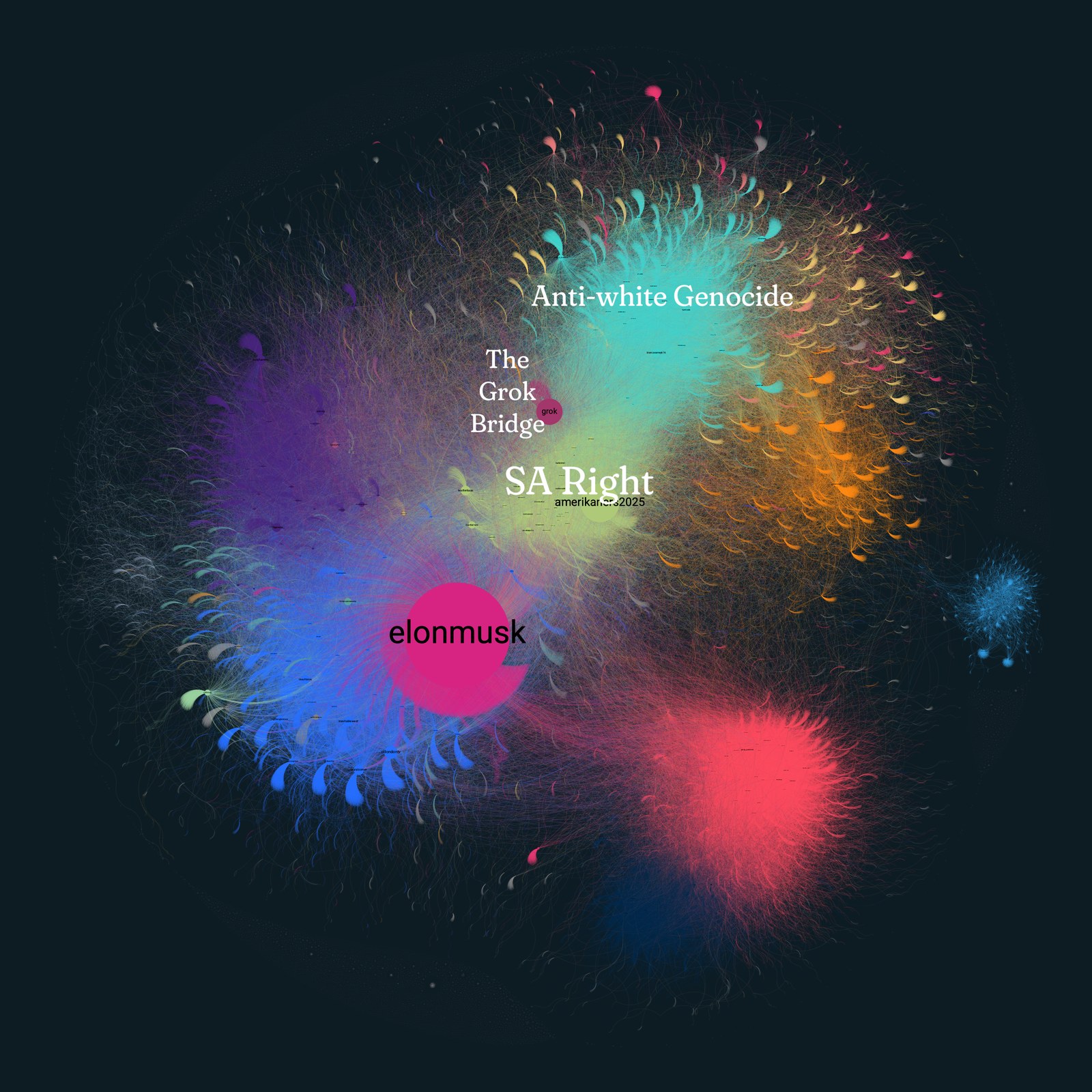

White genocide / Afrikaner narrative, X, February 2026. Turquoise cluster (top right): South African fact-checking and counter-narrative accounts. Lime-green cluster: far-right amplifiers routing the narrative toward US political audiences. @elonmusk is the dominant node in the amplification cluster; @grok sits in the structural gap between the two.

In the white genocide network, you can clearly see the separate clusters in different colours.[^16] You can also see the interaction surface between the turquoise cluster (accounts countering the ‘white genocide’ claim) and the lime cluster (the SA right): a lot of noise, a lot of ‘shots across bows’. One lone central account is visible between them: Grok. Used in the back and forth of fact-checking, debating and reasoning, it stands out as a large node in that bridging position.[^17] Not a media house, not an expert or influencer, but an LLM. At a meta-level, the SA right is clearly a bridge between South African conversations and the US use of the term ‘White Genocide’, trying to leverage US political pressure on the South African government. The interactions with Grok were of two flavours. Fact-checkers query it for SAPS crime statistics and Africa Check reports to debunk the claim of ‘white genocide’; amplifiers query it for confirmation that white farmers are disproportionately targeted. Both receive answers grounded in the same underlying data, yet interpret the outputs through their own priors. This is precisely the weak-tie function Granovetter identified: the bridge carries information, but the clusters do not verify it against each other. Grok is not a participant in this dispute; it is the arbitration layer both sides are using.

[^16]: Colours are communities from Louvain modularity on a graph whose edges are retweets and replies between accounts.

[^17]: Node size is proportional to the volume of retweets and replies involving that account.
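One hedged way to put a number on the ‘bridging position’ described above is the participation coefficient from network science (Guimerà and Amaral): a node scores 0 when all its interactions stay inside one community and moves toward 1 as they spread evenly across many. The sketch below is not the Murmur Intelligence pipeline. The accounts, edge weights and community labels are invented toy data, and the labels are assumed to come from a separate community-detection step such as Louvain.

```python
from collections import defaultdict


def participation_coefficient(edges, community):
    """Return 1 - sum_c (w_ic / w_i)^2 for every node.

    edges: iterable of (account_a, account_b, weight) interaction counts.
    community: dict mapping each account to its community label.
    """
    weight_to = defaultdict(lambda: defaultdict(float))
    for a, b, w in edges:
        weight_to[a][community[b]] += w
        weight_to[b][community[a]] += w
    scores = {}
    for node, per_community in weight_to.items():
        total = sum(per_community.values())
        scores[node] = 1.0 - sum((w / total) ** 2 for w in per_community.values())
    return scores


# Toy data (hypothetical): two closed clusters that only meet at "llm_bot".
# The detection step has to put the bridge somewhere; here it landed in "A".
community = {
    "a1": "A", "a2": "A", "a3": "A",
    "b1": "B", "b2": "B", "b3": "B",
    "llm_bot": "A",
}
edges = [
    ("a1", "a2", 3), ("a2", "a3", 2), ("a1", "a3", 1),
    ("b1", "b2", 3), ("b2", "b3", 2), ("b1", "b3", 1),
    ("a1", "llm_bot", 2), ("b1", "llm_bot", 2),
]

scores = participation_coefficient(edges, community)
for node, score in sorted(scores.items(), key=lambda kv: -kv[1]):
    print(f"{node:8s} {score:.2f}")
```

In this toy graph the bridging account splits its interactions evenly between the two clusters and so gets the highest score, while accounts that only talk within their own cluster score zero. Real retweet and reply graphs are far noisier, so a score like this would be one signal among several, not a verdict.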

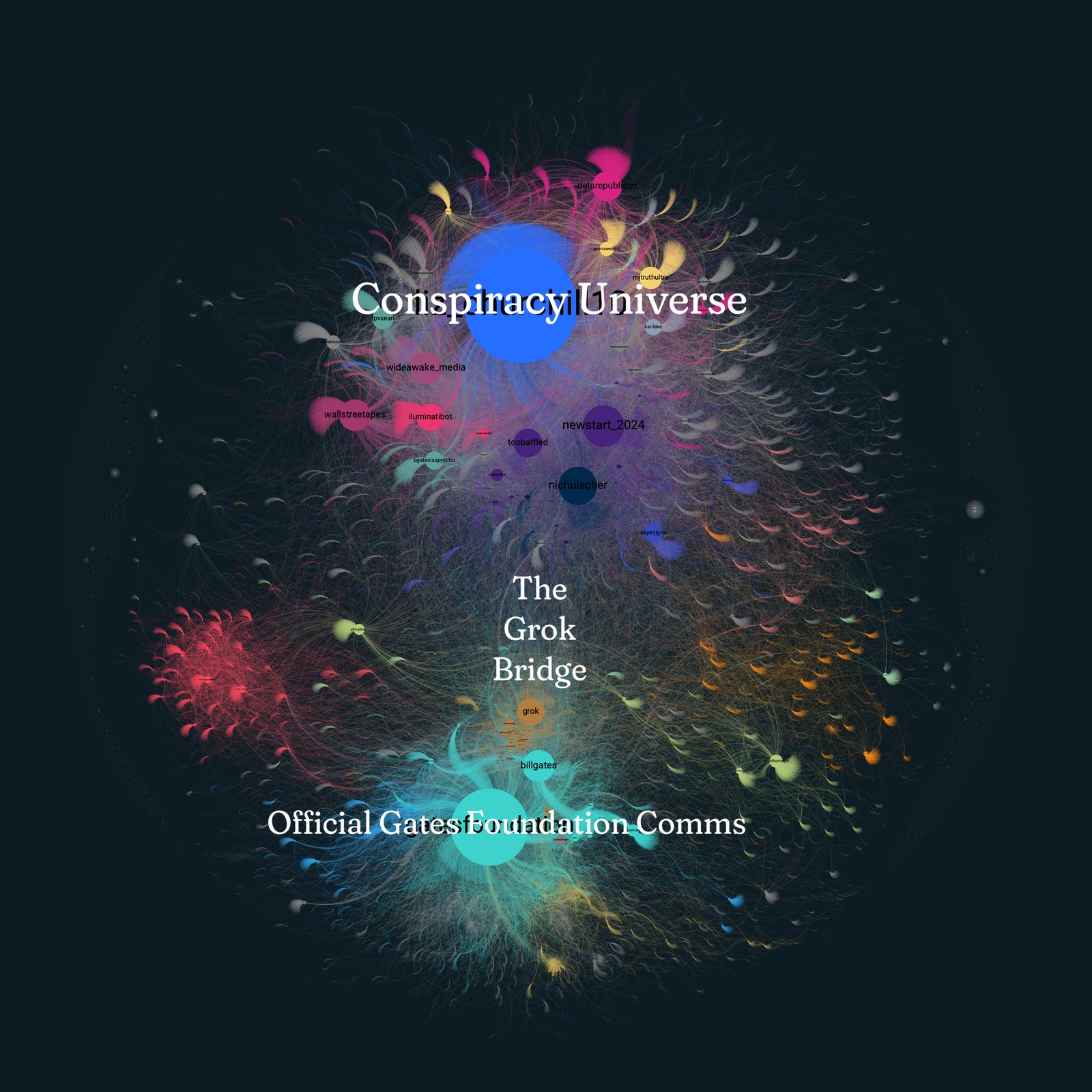

Gates Foundation vaccine conspiracy, X, past 12 months. @liz_churchill10 anchors the conspiracy-oriented cluster (top); @gatesfoundation anchors the official conversation (bottom). @grok sits between them.

The Gates Foundation network shows a slightly different version of the same pattern. With this network I want to show that Grok does not really entertain completely ‘out there’ conspiracy theories. You can see the Gates Foundation cluster at the bottom and the conspiracy cluster at the top. What is interesting is that Grok is in the middle, a bridging tie, but sits closer to the Gates Foundation cluster than to the conspiracy cluster. When users ask Grok to validate extreme views, it tends to gently deny them, so absolute fringe theorists retreat to the periphery of conversations. Those with more reasonable and flexible approaches remain attached to this bridge, resulting in a healthier network overall, even if it allows some completely false theories to persist in the network.

The conspiracy cluster typically circulates selectively edited clips from Bill Gates’s 2010 TED talk, in which he noted that better health leads to lower population growth, presented as evidence of a depopulation agenda. The official cluster cites the Foundation’s work on malaria and polio eradication. When queried on these claims, the model returns the same underlying evidence: vaccines have reduced child mortality from 12 million to under 5 million annually since 1990, fertility decline correlates more strongly with female education and economic opportunity than with vaccination programmes, and some early messaging by the Foundation on population was indeed clumsy. The conspiracy cluster extracts the “population” phrase as proof of malice; the official cluster sees confirmation of successful public health intervention. Both treat the output as vindication. This is not a factual dispute being arbitrated; it is two incompatible prior distributions filtering the same signal. The bottleneck is not the accuracy of the information supplied, but the receiver’s network position and priors.

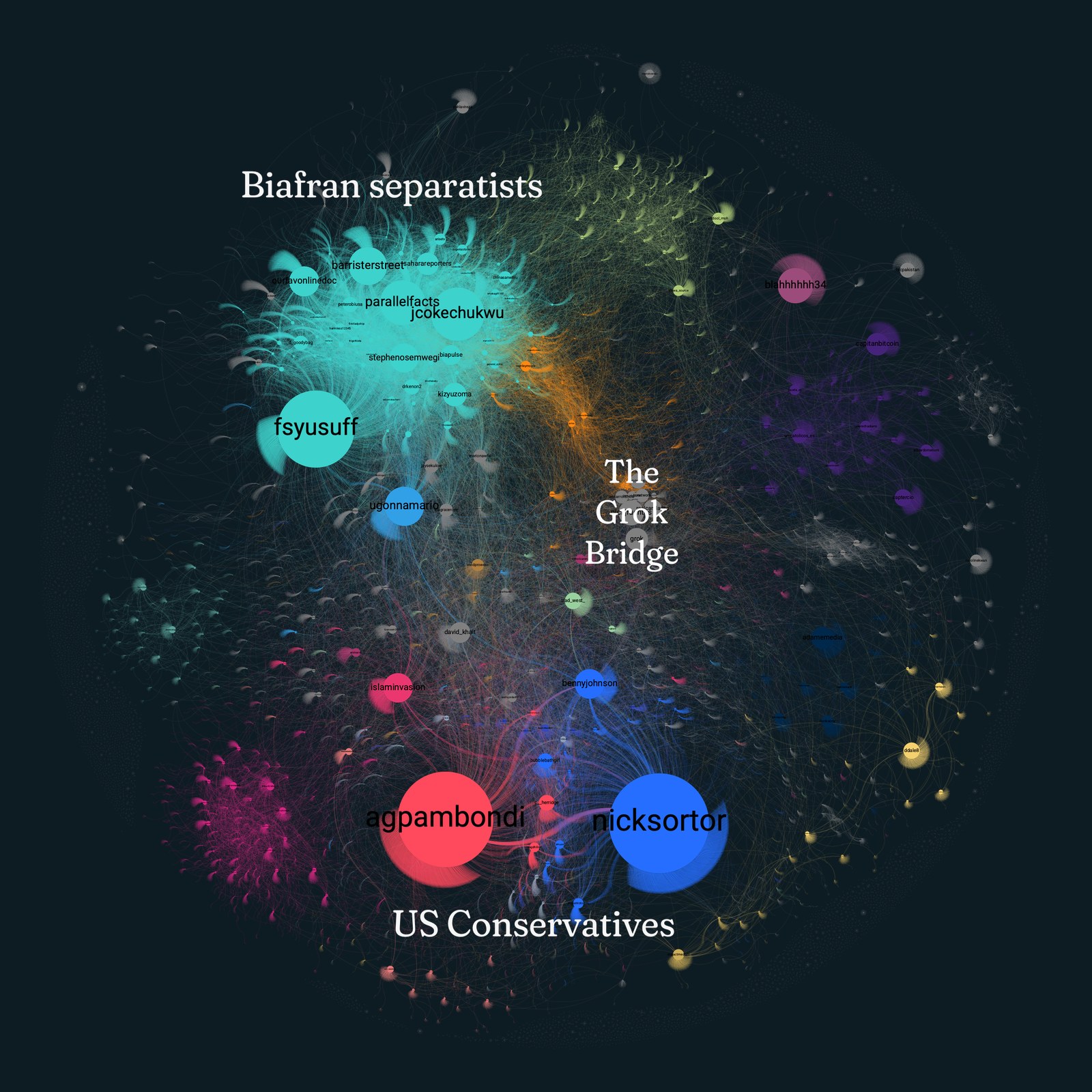

Christian genocide narrative, X, October 2025. @fsyusuff and @jcokechukwu anchor the IPOB-linked Nigerian advocacy community; @gpambondi and @nicksortor anchor the US amplification cluster; @grok is at the structural centre.

The Christian genocide narrative illustrates the bridging function at its most consequential. IPOB-linked accounts in Nigeria’s southeast and conservative amplifiers in the US both route their claims through Grok, while Nigerian government and Islamic authorities use it to contest the single-linear-narrative framing. The underlying violence is real and complex: farmer-herder conflict, banditry, jihadist activity, and targeted attacks on Christian communities are all occurring. Yet the specific “genocide” framing serves a separatist political project. Grok becomes the shared reference point smack-bang-in-the-middle precisely because it can acknowledge both the documented killings and the strategic inflation of the narrative. Between January and October 2025 the narrative reached an estimated 2.83 billion impressions.[^18] A non-trivial portion of those conversations flowed through, or was anchored by, the same bridging node, Grok.

[^18]: Mom, C. (2025). “INSIGHT: Social media amplifiers of Christian genocide claim in Nigeria affiliated with IPOB.” TheCable, 19 October. https://www.thecable.ng/insight-social-media-amplifiers-of-christian-genocide-claim-in-nigeria-affiliated-with-ipob/ Cites Meltwater data: an estimated 2.83 billion impressions, January–October 2025.

Granovetter showed that weak ties derive their value from rarity: most connections stay within clusters, and the rare bridge between them is load-bearing precisely because almost no one else maintains it. The networks above confirm that structure. Within each, the vast majority of connections are within-cluster; Grok’s bridging position is rare in exactly the sense Granovetter described. What is different is that the same entity occupies this rare position simultaneously across networks that share no common actors, no common topic, and no common geography. With a single system prompt change, this crucial digital public square utility could be compromised.

The League of Nations problem

After the catastrophe of the First World War, the old order of international relations (empires, bilateral alliances, balance-of-power diplomacy) had visibly failed. The League of Nations, founded in 1920, proposed something structurally new: multilateral arbitration rather than unilateral authority.[^19] No single power would adjudicate disputes. A collective of nations, each with its own interests and biases, would triangulate toward decisions that no single member could be trusted to make alone.

[^19]: League of Nations (1919). Covenant of the League of Nations. Treaty of Versailles, Part I. https://avalon.law.yale.edu/20th_century/leagcov.asp

The League of Nations failed. It was dominated by a few powerful members. It had no enforcement mechanism. The United States never joined. Imperial interests captured the institution meant to supersede them. By 1939, the architecture had collapsed.

But the logic was right. Multilateral arbitration is more robust than unilateral authority, provided the structural vulnerabilities are addressed. The United Nations, for all its flaws, learned from the League’s failures: a Security Council with enforcement powers, broader membership, deeper institutional mechanisms.

We are at the League of Nations moment for epistemic infrastructure.

The old epistemic order (institutional trust, expert authority, journalistic gatekeeping) has failed because it lost the trust that made its uncertainty-reduction function possible.[^20] Individual LLMs, each controlled by a single owner with a single system prompt, are the unilateral powers of this new landscape: Grok is Musk’s, ChatGPT is OpenAI’s, Gemini is Google’s. Each carries its owner’s editorial preferences whether users know it or not.

[^20]: I feel I must reiterate here that media and journalism did not lose trust purely through their own doing. They are victims of circumstance, not incompetent actors.

A single LLM as public arbiter has the same structural flaw as a single empire claiming to adjudicate international disputes. The answer the League of Nations got right, despite its ultimate failure, was that arbitration must be multilateral. Diverse systems with different biases, triangulating against each other, produce outputs more robust than any single authority, however well-intentioned.

Diversity of LLMs maps directly to diversity of weak ties: multiple brokers spanning different knowledge clusters reduce the risk of any single capture event. If Grok is compromised by Musk’s editorial preferences, and ChatGPT by OpenAI’s, and Claude by Anthropic’s, and Gemini by Google’s, the triangulation across all four is more robust than any one alone. The risk that complicates this: if each model is sycophantic to its own user base, divergence between them may reflect audience capture rather than genuine epistemic disagreement; triangulating across four mirrors still produces a distorted image. That is a reason to design for it, not a reason to abandon the architecture.

The vulnerabilities mirror those of the League: powerful owners dominating the ecosystem, no transparency requirements for system prompts, and political selection already emerging in our observations, with certain LLMs gaining followings along ideological lines. AI is being absorbed into existing tribal epistemics, just as every previous information technology has been.[^21]

[^21]: Print, radio, television: each imagined first as a democratising technology, each captured by existing power structures within a generation; the pattern is consistent enough to treat as the default expectation rather than a risk.

The question is whether we stop at the League, or build toward the UN.

Toward a Council of LLMs

To start, I think it is important to lay down a very clear challenge. A sovereign state is forced to consider its options around LLMs as a public utility. Deciding not to do so means leaving it to the market. That is a reasonable option, but just as with energy reliance, there are realities, implications, benefits and drawbacks that come with the territory.

The question is what the UN version of this architecture would look like. We have been calling the concept a Council of LLMs: not a product but a set of design questions about what deliberate epistemic infrastructure might require if the failures of the League of Nations are taken seriously.

The electricity, or public utility, parallel suggests the model. The LLMs themselves stay private, just as power stations remained private under early utility regulation. What becomes public infrastructure is the scaffolding: the system that queries multiple LLMs on the same question, compares their outputs, surfaces their disagreements, and presents the result to a user who can see where the models converge and where they diverge. Open source, publicly funded, replicable by anyone. Public investment in the connective layer, the grid rather than the generators. Grok’s integration into X is convenient for users already on the platform, but it remains X’s product: it must align with X’s interests, and it is only on X.
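The scaffolding described above can be sketched in a few lines. This is a toy under loud assumptions, not our implementation: each ‘model’ is a plain Python function standing in for a real provider, answers are compared by exact match after crude normalisation (real outputs would need semantic comparison), and the names `ask_council`, `CouncilResult` and the 75% quorum are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional


@dataclass
class CouncilResult:
    question: str
    positions: Dict[str, List[str]]  # normalised answer -> models that gave it
    agreement: float                 # share of models behind the most common answer
    consensus: Optional[str]         # None when the quorum is not reached


def ask_council(
    question: str,
    models: Dict[str, Callable[[str], str]],
    quorum: float = 0.75,
) -> CouncilResult:
    """Put one question to every model and report both agreement and dissent."""
    positions: Dict[str, List[str]] = defaultdict(list)
    for name, ask in models.items():
        answer = ask(question).strip().lower()  # crude normalisation (assumption)
        positions[answer].append(name)
    top_answer, backers = max(positions.items(), key=lambda item: len(item[1]))
    agreement = len(backers) / len(models)
    consensus = top_answer if agreement >= quorum else None
    return CouncilResult(question, dict(positions), agreement, consensus)


# Stand-ins for real providers: each "model" is just a function here.
models = {
    "model_a": lambda q: "No",
    "model_b": lambda q: "no ",
    "model_c": lambda q: "No",
    "model_d": lambda q: "Yes",
}

result = ask_council("Is claim X supported by the evidence?", models)
print("consensus:", result.consensus, f"({result.agreement:.0%} agree)")
for answer, names in result.positions.items():
    print(f"  {answer!r}: {', '.join(names)}")
```

The point of the design is that the dissenting model is never thrown away: the result carries every position and who held it, so a user sees the split as well as the majority.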

From our research, we find that combining LLMs as a ‘council’ in various configurations produces a vastly stronger and more robust output than any single LLM. You mostly average out any single LLM’s hallucinations and biases, and a final open source adjudication model settles any remaining disagreement (yes, LLMs sometimes refuse to agree on things, and that’s a good thing).

Recent research on multi-agent LLM debate supports the underlying principle: when multiple models critique and refine each other’s answers, the resulting accuracy exceeds what any single model achieves alone, and hallucinations are reduced because correlated errors are less likely across diverse training sets.[^22] Empirical evidence from the Prosocial Ranking Challenge, the first randomised comparison of alternative social media algorithms across platforms, extends the logic beyond LLMs: bridging-oriented ranking reduced affective polarisation without reducing engagement, suggesting that architectures designed for epistemic quality need not sacrifice the adoption that makes them functional.[^23] The design choice that follows from the League’s unanimity mistake is to surface both divergence and consensus: not to suppress disagreement, but not to treat agreement as invisible either. Where models agree, the convergence is more trustworthy than any single model’s confidence. Where they diverge, the divergence itself is the most useful output. System prompts, the structural vulnerability that the Grok incident exposed, would need to be visible rather than hidden: users should know what instructions shape the output they are reading.

[^22]: Du, Y., Li, S., Torralba, A., Tenenbaum, J.B. & Mordatch, I. (2024). “Improving Factuality and Reasoning in Language Models through Multiagent Debate.” Proceedings of the 41st International Conference on Machine Learning (PMLR 235). https://arxiv.org/abs/2305.14325

[^23]: Stray, J. et al. (2026). “The Prosocial Ranking Challenge: results of the first direct comparisons of multiple alternative social media algorithms.” ArXiv preprint, March. https://rankingchallenge.substack.com/p/its-possible-to-reduce-polarization

So this is a big task, but we reckon there is a sensible iterative path to get there.

Our first iteration has been monitoring daily trends on X, deciding which are worth placing in front of the council, and then testing many different network topologies and consensus methods to see the differences. On the topology side, we compare ring, tree, bus, star, and fully connected (full mesh) layouts; on the agreement side, we test approaches such as the Delphi method and structured consensus decision-making. There are many possibilities, and we’re exploring these combinations to understand their role in coordination between LLMs on difficult and complex tasks of collecting evidence, reasoning within legal frameworks, and writing reports and articles for public consumption.
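To give a feel for why topology matters, the sketch below builds each layout for a seven-member council and compares two rough costs: how many links must be maintained, and the worst-case number of hops for one member’s message to reach another. It is illustrative only. In particular, the ‘bus’ is modelled as a simple chain and the ‘tree’ as a binary tree, both assumptions of this example and not a description of our test setup.

```python
from collections import deque


def ring(n):
    return {(i, (i + 1) % n) for i in range(n)}


def star(n):
    return {(0, i) for i in range(1, n)}  # node 0 is the hub


def full_mesh(n):
    return {(i, j) for i in range(n) for j in range(i + 1, n)}


def chain(n):
    return {(i, i + 1) for i in range(n - 1)}  # stand-in for a bus (assumption)


def binary_tree(n):
    return {((i - 1) // 2, i) for i in range(1, n)}  # parent of i is (i - 1) // 2


def diameter(n, edges):
    """Longest shortest path between any two members (BFS from every node)."""
    adj = {i: set() for i in range(n)}
    for a, b in edges:
        adj[a].add(b)
        adj[b].add(a)
    longest = 0
    for source in adj:
        dist = {source: 0}
        queue = deque([source])
        while queue:
            u = queue.popleft()
            for v in adj[u]:
                if v not in dist:
                    dist[v] = dist[u] + 1
                    queue.append(v)
        longest = max(longest, max(dist.values()))
    return longest


n = 7
layouts = {"ring": ring, "tree": binary_tree, "bus": chain, "star": star, "full mesh": full_mesh}
summary = {name: (len(build(n)), diameter(n, build(n))) for name, build in layouts.items()}

for name, (links, hops) in summary.items():
    print(f"{name:10s} links={links:2d} worst-case hops={hops}")
```

The trade-off is visible even at this size: a full mesh lets every model see every other model’s answer in one hop but needs the most links, while a star is cheap but routes everything through a single hub, which reintroduces a single point of capture.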

Our intention is to get something ready to pilot during the next South African local elections in 2026/7. Elections offer a fixed timeframe, high stakes, increased online activity and a well-studied setting; they are ripe for complex debates and heightened public sensemaking, and therefore carry a greater likelihood of interference and disorder.

We’re genuinely unsure if this will work, but we feel it is important work to start exploring this space.

The tensions are worth stating. National funding creates dependency: a government that funds the scaffolding can also defund it when the outputs are inconvenient. Open source mitigates capture (anyone can fork and redeploy) but doesn’t eliminate the funding question. Whether LLM providers would cooperate with a transparency requirement for system prompts is uncertain. Whether users, given the choice between a council that shows them disagreement and a single model that tells them what they want to hear, would choose the council is the deepest open question of all.

There is a deeper tension still. Schopenhauer argued in 1851 that reading is cognitive outsourcing: “when we read, another person thinks for us: we merely repeat his mental process.” The person who reads constantly, he warned, “gradually loses the ability to think for himself; just as a man who is always riding at last forgets how to walk.”[^24] Replace “reads” with “consults an LLM” and the diagnosis predates the technology by more than a century and a half. A council that triangulates across models, exposes system prompts, and surfaces disagreement is still a system that thinks on someone’s behalf. If users treat its convergence as the answer rather than as a starting point for their own reflection, you’ve solved capture, transparency, and funding, and built a more sophisticated version of the problem Schopenhauer diagnosed with books.

[^24]: Schopenhauer, A. (1851). “On Reading and Books.” Parerga and Paralipomena, Vol. II, Ch. XXIV.

The partial architectural answer is already in the design: surface divergence and consensus. Where models disagree, the disagreement is the output, and disagreement forces the user to think. Schopenhauer’s own prescription was identical: “it is only by reflection that one can assimilate what one has read.” A system that presents clean agreement invites passivity. A system that presents structured disagreement demands engagement. That design choice is not incidental to the Council concept. It is the thing that separates epistemic infrastructure from a more elaborate oracle.

We’re not saying that we have the solution; we’re just convinced these are pertinent questions to start asking. The questions matter because the default (millions of people consulting individual LLMs with hidden system prompts and no structural checks) is already the operating condition. The design choice is whether that remains accidental or becomes deliberate.

In the likeness of a human mind

Claude Shannon’s problem was transmitting signals through noisy channels. Because eliminating noise is impossible, his solution was to build systems robust to it: add redundancy, correct errors, and stay within the channel’s capacity.

The misinformation frame tries to eliminate the noise. Shannon would engineer around it.

A council of diverse LLMs would be, structurally, an error-correction system: redundant channels with independent noise profiles, where no single failure corrupts the output. Whether such a system can develop the trust infrastructure it needs (transparent processes, accountable owners, visible disagreement) remains open.
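The error-correction analogy can be put in numbers with the simplest possible scheme, a majority vote over redundant channels. The sketch below is ours and rests on strong assumptions that we state plainly: each answer is simply right or wrong, every model is wrong with the same probability `p`, and the errors are fully independent. Real models trained on overlapping data will be correlated, so treat the result as a best case and not as a forecast. The function name `majority_error` is hypothetical.

```python
from math import comb


def majority_error(n: int, p: float) -> float:
    """Probability that more than half of n independent channels are wrong.

    Assumes binary right/wrong answers, an identical error rate p for every
    channel, and fully independent errors (an idealisation).
    """
    return sum(
        comb(n, k) * p**k * (1 - p) ** (n - k)
        for k in range(n // 2 + 1, n + 1)
    )


if __name__ == "__main__":
    # Odd council sizes avoid ties in the vote.
    for size in (1, 3, 5):
        print(size, round(majority_error(size, 0.2), 5))
```

Under these assumptions, a single model that is wrong 20% of the time becomes a council of three that is wrong about 10% of the time, and a council of five that is wrong about 6% of the time. The gain shrinks as the models’ errors become correlated, which is why diversity of the channels matters more than their number.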

This approach is harder than fact-checking. It offers no dramatic takedowns, no villains punished, and no clear victories. But whether that architecture can earn trust faster than it can be captured is the question the misinformation industry has not thought to ask.

“Once men turned their thinking over to machines in the hope that this would set them free. But that only permitted other men with machines to enslave them.” (Frank Herbert, Dune)[25]

Footnotes

1. Two years ago, suggesting in conversation that LLMs were heading toward public-resource status got the reaction that I had lost my mind; the premise is less controversial now than the implications.

2. I hesitate to use the word ‘good’ here. Architecture is not good or bad; it is a tool, and it can be used for either.

3. Milgram, S. (1967). “The Small-World Problem.” Psychology Today, 1(1), 61-67. https://snap.stanford.edu/class/cs224w-readings/milgram67smallworld.pdf

4. Granovetter, M. (1973). “The Strength of Weak Ties.” American Journal of Sociology, 78(6), 1360-1380. https://doi.org/10.1086/225469

5. Taleb, N.N. (2012). Antifragile: Things That Gain from Disorder. Random House.

6. TechCrunch (2025). “X users treating Grok like a fact-checker spark concerns over misinformation.” 19 March. https://techcrunch.com/2025/03/19/x-users-treating-grok-like-a-fact-checker-spark-concerns-over-misinformation/

7. The Guardian (2025). “Musk’s AI Grok bot rants about ‘white genocide’.” 14 May. https://www.theguardian.com/technology/2025/may/14/elon-musk-grok-white-genocide

8. UMBC (2025). “Grok’s responses show how generative AI can be weaponized.” June. https://umbc.edu/stories/groks-white-genocide-responses-show-how-generative-ai-can-be-weaponized/

9. When the New York Times has an editorial policy, it publishes it; when Grok has one, you find out when something goes wrong.

10. The distinction is Schubert’s: platforms can disclaim responsibility for user speech; LLM providers cannot. See Schubert, S. (2026). “Social media versus AI: the fate of public discourse.” The Update, 12 January. https://www.update.news/p/social-media-versus-ai-the-fate-of

11. Williams, D. (2026). “How AI Will Reshape Public Opinion.” Conspicuous Cognition, 3 March. https://www.conspicuouscognition.com/p/how-ai-will-reshape-public-opinion

12. Matthews, D. (2026). “Pro-social media.” 19 January. https://dylanmatthews.substack.com/p/pro-social-media

13. Burn-Murdoch, J. (2026). Financial Times analysis of how major chatbots steer users’ policy views (Co-operative Election Study topics). https://www.ft.com/content/3880176e-d3ac-4311-9052-fdfeaed56a0e

14. Murmur Intelligence network analysis, X API. Node size: weighted degree centrality; community detection: Louvain modularity algorithm.

15. Topic windows: white genocide/Afrikaner narrative: January–February 2026; Gates Foundation vaccine conspiracy: March 2025–March 2026; Christian genocide narrative: October 2025 (peak conversation period).

16. Colours are communities from Louvain modularity on a graph whose edges are retweets and replies between accounts.

17. Node size is proportional to the volume of retweets and replies involving that account.

18. Mom, C. (2025). “INSIGHT: Social media amplifiers of Christian genocide claim in Nigeria affiliated with IPOB.” TheCable, 19 October. https://www.thecable.ng/insight-social-media-amplifiers-of-christian-genocide-claim-in-nigeria-affiliated-with-ipob/ The article cites Meltwater data: an estimated 2.83 billion impressions, January–October 2025.

19. League of Nations (1919). Covenant of the League of Nations. Treaty of Versailles, Part I. https://avalon.law.yale.edu/20th_century/leagcov.asp

20. I feel I must reiterate here that media and journalism did not lose trust purely through their own doing. They are victims of circumstance, not incompetent actors.

21. Print, radio, television: each imagined first as a democratising technology, each captured by existing power structures within a generation; the pattern is consistent enough to treat as the default expectation rather than a risk.

22. Du, Y., Li, S., Torralba, A., Tenenbaum, J.B. & Mordatch, I. (2024). “Improving Factuality and Reasoning in Language Models through Multiagent Debate.” Proceedings of the 41st International Conference on Machine Learning (PMLR 235). https://arxiv.org/abs/2305.14325

23. Stray, J. et al. (2026). “The Prosocial Ranking Challenge: results of the first direct comparisons of multiple alternative social media algorithms.” arXiv preprint, March. https://rankingchallenge.substack.com/p/its-possible-to-reduce-polarization

24. Schopenhauer, A. (1851). “On Reading and Books.” Parerga and Paralipomena, Vol. II, Ch. XXIV.

25. Herbert, F. (1965). Dune. Chilton Books.